From Static Lists to Threat Intelligence: Better Domain Detection in Elastic

A scalable approach to detecting malicious domains using Threat Intelligence and Indicator Match Rules

One of the most common techniques used in phishing and initial access campaigns is the creation of domains that closely resemble legitimate ones. Attackers exploit typosquatting, homograph attacks, and brand impersonation to deceive users and steal credentials.

For a Security Operations Center (SOC), detecting interactions with these domains can provide early visibility into phishing campaigns, credential harvesting attempts, or malicious infrastructure used during the early stages of an attack.

However, many detection strategies still rely on static rules with hardcoded domain lists, an approach that quickly becomes outdated and difficult to maintain.

In this article I want to explore how a detection use case can evolve from a static domain list approach to a Threat Intelligence-driven detection model using indicator match rules in Elastic.

The Problem: Static Similar Domain Detection

One common SOC strategy is to create static detection rules that search the organization's events for suspicious domains resembling its legitimate ones.

These rules may include:

- A list of known, similar domains generated using fuzzy matching techniques (e.g. Damerau-Levenshtein distance) and hardcoded directly inside the detection rule

Consider a simple example, where a company owns the legitimate domains:

company.com

company.net

Listing every possible similar domain statically is not realistic. So, our approach to date has been to create a query that uses fuzzy matching to catch possible similar domains:

url.domain :

(

company.com ~ 3 OR

company.net ~ 3

)

AND NOT url.domain:

(

company.com OR

company.net

)

The detection logic works as follows: the query uses the Damerau-Levenshtein distance to find all terms within a maximum of n edits of each legitimate domain (here n = 3; the value is a trade-off between detection coverage and computational cost).

The AND NOT clause then excludes the legitimate domains themselves (company.com and company.net), which would otherwise also satisfy the fuzzy query as exact matches.

Thus, the rule is triggered whenever a host within the organization interacts with one of these similar domains flagged by fuzzy logic, for example company.org or conpany.com.
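To make the distance threshold concrete, here is a minimal Python sketch of the comparison the fuzzy query relies on. It uses the optimal string alignment variant of the Damerau-Levenshtein distance. The function names `osa_distance` and `is_lookalike` are illustrative, and this is not a description of how Elastic implements fuzzy matching internally.

```python
def osa_distance(a: str, b: str) -> int:
    """Optimal string alignment distance (restricted Damerau-Levenshtein).

    Counts insertions, deletions, substitutions and swaps of two
    adjacent characters, each with a cost of 1.
    """
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i
    for j in range(cols):
        d[0][j] = j
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution (or match)
            )
            # Swap of two adjacent characters
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[rows - 1][cols - 1]


# Legitimate domains from the example above
LEGIT_DOMAINS = ("company.com", "company.net")


def is_lookalike(domain: str, legit=LEGIT_DOMAINS, max_edits: int = 3) -> bool:
    """Mimic the rule: close to a legitimate domain, but not one of them."""
    if domain in legit:  # the AND NOT part of the query
        return False
    return any(osa_distance(domain, l) <= max_edits for l in legit)


print(osa_distance("company.com", "conpany.com"))  # 1
print(osa_distance("company.com", "company.org"))  # 3
print(is_lookalike("company.com"))                 # False
```

Under these assumptions, a long lookalike such as conpany-secure-login.com is far more than 3 edits away from company.com, so a small edit distance alone would not flag it.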

This makes it necessary to embed the customer's domain list directly inside the detection rule, and that list grows or shrinks with the number of legitimate domains the company owns and wants to monitor.

While this approach may initially appear effective, it introduces several structural limitations.

Limitations of Static Detection Rules

The main issue is that domain impersonation is a dynamic problem, while (in the case under analysis) the detection rule remains static. Several challenges quickly emerge.

Lack of Continuous Updates

The list of domains to be monitored and verified by fuzzy queries changes over time, as company domains are decommissioned or new ones are acquired.

For example:

company-secure-login.com

company-verify365.com

company-sso-login.net

These domains will not be monitored unless the rule is manually updated.

Maintenance Overhead and Limited Scalability

SOC engineers must periodically:

- Regenerate fuzzy domain lists

- Update detection rules

- (Possibly) redeploy them

Over time this creates maintenance fatigue and increases operational overhead. The static list quickly becomes difficult to manage.
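A rough sense of why such lists become hard to manage can be had from the following Python sketch, which enumerates only the single-edit variants of one domain's first label. The helper `single_edit_variants` and the chosen alphabet are assumptions made for illustration, not part of any tool mentioned in this article.

```python
import string

# Characters allowed in a hostname label (lowercase letters, digits, hyphen)
ALPHABET = string.ascii_lowercase + string.digits + "-"


def single_edit_variants(domain: str) -> set[str]:
    """Return domains whose first label is exactly one edit away."""
    label, _, rest = domain.partition(".")
    variants = set()
    for i in range(len(label) + 1):
        head, tail = label[:i], label[i:]
        if tail:
            variants.add(head + tail[1:])                        # deletion
            for ch in ALPHABET:
                variants.add(head + ch + tail[1:])               # substitution
        if len(tail) > 1:
            variants.add(head + tail[1] + tail[0] + tail[2:])    # transposition
        for ch in ALPHABET:
            variants.add(head + ch + tail)                       # insertion
    variants.discard(label)  # drop the original label itself
    # Labels cannot be empty or start/end with a hyphen
    return {
        f"{v}.{rest}"
        for v in variants
        if v and not v.startswith("-") and not v.endswith("-")
    }


variants = single_edit_variants("company.com")
print(len(variants))
print("conpany.com" in variants)
```

Even at a single edit the set holds several hundred candidates for one short domain, and it grows much faster at two or three edits, which is why regenerating and redeploying such lists by hand does not scale.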

A rule created months earlier may contain outdated indicators and miss new domains that are actively in use.

In other words:

A static rule is only a snapshot of the company environment.

A Better Approach: Threat Intelligence Driven Detection

Instead of embedding domain lists directly in detection rules, a more scalable approach is to rely on Threat Intelligence indicators.

The core idea is simple: detection rules should contain logic, not static data.

The indicator data should instead be provided, maintained and updated by the Threat Intelligence platform.

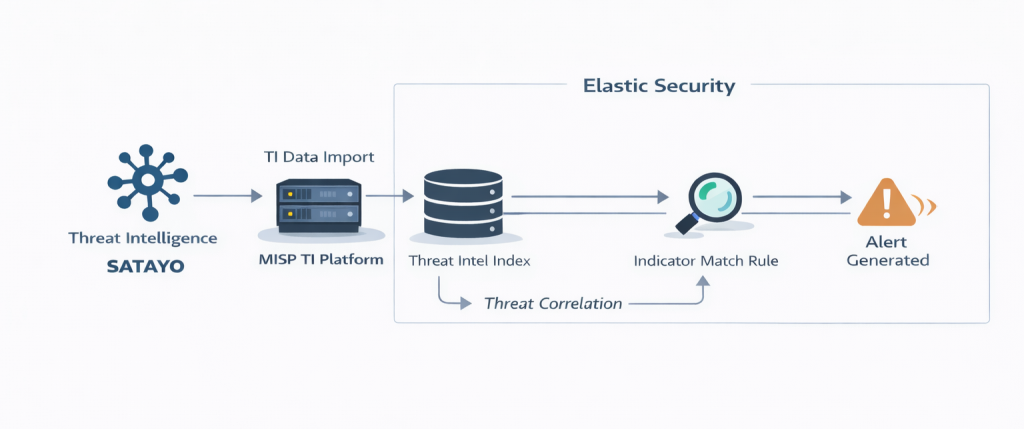

Architecture Overview

In this implementation, suspicious or lookalike domains are identified through the Threat Intelligence service provided by SATAYO. These indicators are then centralized and managed using MISP before being consumed by the detection platform.

Workflow:

- Threat Intelligence (via the SATAYO platform) identifies suspicious domains

- Domains are imported and stored as indicators in MISP

- Indicators are ingested into Elastic Security

- Detection rules correlate telemetry with the threat indicator dataset

This model enables dynamic detection that automatically evolves with the threat landscape and the real evidence found by SOC analysts during analysis.

Threat Intelligence Indicator Example

In our case, suspicious or similar domains are identified and collected in SATAYO, which provides intelligence related to potentially malicious domains.

Once identified, these domains are imported into MISP where they are stored as indicators and enriched with additional context.

The indicators are then periodically ingested into Elastic Security and stored in the threat intelligence index, where they can be correlated with network telemetry through indicator match rules.
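How a MISP attribute relates to the fields of an indexed indicator can be sketched in Python as follows. This is purely illustrative: the input dictionaries are a simplified, hypothetical shape of a MISP event and attribute, the function `to_indicator_doc` is invented for this example, and in practice the mapping is performed by the Elastic integration rather than by custom code.

```python
def to_indicator_doc(event: dict, attribute: dict) -> dict:
    """Flatten a (simplified) MISP event/attribute pair into an indicator document."""
    # Derive the TLP marking from a tag such as "tlp:amber", if present
    tlp = next(
        (tag.split(":", 1)[1].upper()
         for tag in event.get("tags", [])
         if tag.lower().startswith("tlp:")),
        None,
    )
    doc = {
        "threat.feed.name": "MISP",
        "threat.indicator.type": "domain-name",
        "threat.indicator.url.domain": attribute["value"],
        "misp.attribute.type": attribute["type"],
        "misp.attribute.to_ids": attribute["to_ids"],
        "misp.event.id": event["id"],
        "misp.event.info": event["info"],
    }
    if tlp is not None:
        doc["threat.indicator.marking.tlp"] = tlp
    return doc


# Hypothetical input, shaped after the example values used in this article
event = {"id": 99999, "info": "conpany.com", "tags": ["tlp:amber"]}
attribute = {"type": "domain", "value": "conpany.com", "to_ids": True}

doc = to_indicator_doc(event, attribute)
for field, value in doc.items():
    print(f"{field}: {value}")
```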

Example document stored in Elastic:

threat.indicator.type: domain-name

misp.attribute.type: domain

misp.event.id: 99999

misp.event.info: conpany.com

threat.feed.name: MISP

threat.indicator.marking.tlp: AMBER

Elastic Indicator Match Detection Rule

Instead of matching against a static list, in Elastic we can correlate events with Threat Intelligence indicators.

Example detection logic

# Custom query (KQL)

event.category:network and url.domain:*

The actual correlation is done through the indicator match configuration: the events collected by the SIEM are compared against the IoCs provided by the TI platform and indexed in Elastic.

# Indicator index query

@timestamp >= "now-90d/d" and event.module:(threatintel or ti_*) and threat.indicator.type:domain-name and threat.indicator.url.domain:* and not labels.is_ioc_transform_source:"true" and misp.attribute.to_ids:"true"

# Indicator mapping

url.domain MATCHES threat.indicator.url.domain

Example Threat Match Rule

Below is a simplified example of a detection rule using indicator matching.

{

"name": "Threat Intel Domain Indicator Match",

"description": "This rule is triggered when a domain indicator from the Threat Intel Filebeat module or integrations has a match against an event that contains domain data, like DNS request, HTTP network log, etc. This rule limits matches to indicators where the MISP attribute to_ids is true, ensuring IOC relevance for detection systems.",

"risk_score": 73,

"severity": "high",

"type": "threat_match",

"language": "kuery",

"index": [

"auditbeat-*",

"filebeat-*",

"logs-*",

"packetbeat-*",

"winlogbeat-*"

],

"query": "event.category:network and url.domain:*",

"threat_filters": [

{

"query": {

"match_phrase": {

"event.category": "threat"

}

}

},

{

"query": {

"match_phrase": {

"event.kind": "enrichment"

}

}

},

{

"query": {

"match_phrase": {

"event.type": "indicator"

}

}

}

],

"threat_query": "@timestamp >= \"now-90d/d\" and event.module:(threatintel or ti_*) and threat.indicator.type:domain-name and threat.indicator.url.domain:* and not labels.is_ioc_transform_source:\"true\" and misp.attribute.to_ids:\"true\"",

"threat_mapping": [

{

"entries": [

{

"field": "url.domain",

"type": "mapping",

"value": "threat.indicator.url.domain",

"negate": false

}

]

}

],

"threat_language": "kuery",

"threat_index": [

"filebeat-*",

"logs-ti_*"

],

"threat_indicator_path": "threat.indicator"

}

This rule triggers whenever:

url.domain == threat.indicator.url.domain

When a new domain is detected, analyzed, and judged potentially malicious, it is added to MISP as an indicator, and the rule detects it automatically without any change to the query.
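The behavior of the rule can be approximated with a small Python simulation. It is a sketch under stated assumptions: documents are flat dictionaries, the 90-day window is computed from a fixed `now` instead of the rounded `now-90d/d` expression, and the names `active_domains`, `match_events`, and the host workstation-007 are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def active_domains(indicators, now, max_age_days=90):
    """Apply the indicator index query: recent domain indicators with to_ids set."""
    cutoff = now - timedelta(days=max_age_days)
    return {
        ind["threat.indicator.url.domain"]
        for ind in indicators
        if ind.get("threat.indicator.type") == "domain-name"
        and ind.get("threat.indicator.url.domain")
        and ind.get("misp.attribute.to_ids") is True
        and ind["@timestamp"] >= cutoff
    }


def match_events(events, indicators, now):
    """Apply the mapping: url.domain must equal threat.indicator.url.domain."""
    domains = active_domains(indicators, now)
    return [
        e for e in events
        if e.get("event.category") == "network" and e.get("url.domain") in domains
    ]


now = datetime(2025, 1, 15, tzinfo=timezone.utc)
events = [
    {"event.category": "network", "host.name": "workstation-124",
     "url.domain": "conpany-secure-login.com"},
    {"event.category": "network", "host.name": "workstation-007",
     "url.domain": "company.com"},
]

indicators = []
before = match_events(events, indicators, now)  # no indicator known yet

# A new indicator arrives from the TI platform; the matching logic is untouched
indicators.append({
    "@timestamp": now - timedelta(days=1),
    "threat.indicator.type": "domain-name",
    "threat.indicator.url.domain": "conpany-secure-login.com",
    "misp.attribute.to_ids": True,
})
after = match_events(events, indicators, now)

print(len(before), len(after))
```

Only the indicator data changes between the two calls; the matching functions, like the rule, stay the same.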

Simulated Detection Scenario

Let’s consider a realistic scenario.

An attacker registers a domain that hosts a phishing page targeting corporate users: conpany-secure-login.com

Threat Intelligence analysts discover the domain and add it to MISP; the indicator is automatically ingested in Elastic.

Next, an employee clicks on a phishing link and their workstation attempts to connect: https://conpany-secure-login.com

In Elastic I expect to receive a related event (provided the correct integration is set up and such events are collected by the SIEM, which is off topic for this article 🙂):

url.domain: conpany-secure-login.com

host.name: workstation-124

event.category: network

The indicator match rule correlates the event with the IoC provided by MISP, and an SOC alert is then triggered:

ALERT

Threat Intel Domain Indicator Match

The SOC analyst now immediately sees:

- The affected host

- The suspicious domain

- The Threat Intelligence context (associated tags and campaign metadata)

- The indicator confidence level

All of this happens without having to edit the detection rule to keep it aligned with the company's domains and, most importantly in multi-tenant contexts, without having to create N custom rules, one for each client.

Detection Engineering Insights

From a Detection Engineering perspective, this use case highlights the difference between static detection models and Threat Intelligence-driven detection.

In the static detection model the detection rule contains both detection logic and indicators (domain list):

url.domain: (

company.com ~ 3 OR

company-secure-login.com ~ 3 OR

company-verify365.com ~ 3

)

The weak points include: fragile rules, manual updates, customization and detection degradation over time.

With the improved model, the rule contains only the correlation logic:

url.domain == threat.indicator.url.domain

Threat data is instead maintained and provided by the Threat Intelligence platform and correlated in Elastic through indicator match rules.

This separation enables:

- Indicator updates and expiration

- Confidence scoring

- Enrichment workflows

Detection Lifecycle Improvements

Within the detection engineering lifecycle, this model improves several phases.

| Phase | Benefit |

|---|---|

| Detection Development | Simpler rules |

| Maintenance | Automatic updates |

| Threat Coverage | Continuously updated indicators |

| Scalability | Multi-tenant (i.e., multiple customers) |

Detection rules should be designed to remain stable over time, while the data they operate on should remain dynamic.

By following this principle, SOC teams can build detection capabilities that are more scalable, resilient, and easier to maintain.

Conclusion

Detecting interactions with suspicious domains is a critical capability for identifying phishing campaigns and early-stage attack infrastructure. However, traditional detection strategies based on static domain lists quickly become outdated and difficult to maintain.

By integrating a Threat Intelligence platform such as MISP with indicator matching capabilities in Elastic Security, organizations can transform this use case into a dynamic and scalable detection mechanism.

Instead of embedding indicators directly inside detection rules, the detection logic simply correlates telemetry with intelligence data maintained externally.

This architectural change provides several benefits:

- Continuously updated indicators

- Reduced rule maintenance

- Improved scalability across multiple customers

- Stronger alignment between Threat Intelligence and Detection Engineering

Ultimately, this approach allows detection rules to remain stable over time, while the intelligence that drives them continues to evolve alongside the threat landscape.

References

https://en.wikipedia.org/wiki/Damerau%E2%80%93Levenshtein_distance

https://www.elastic.co/blog/found-fuzzy-search