By default, all metrics in InfluxDB are stored forever, but some metrics only need to be kept for a much shorter time span. One example is when we’re receiving very large amounts of raw data but are mostly interested in characteristics derived from that raw data.

In this blog post we’ll discuss how to create additional retention policies in InfluxDB, and how to route metrics to the proper policy from the Telegraf consumer.

Use Case

On my AX2012 environment I want to route certain metrics to a specific retention policy, in order to retain them for a shorter time span. These metrics are from AXTrace Agent.

Creating a Retention Policy in InfluxDB

By default we have only 1 retention policy called ‘autogen’. In order to retain our AX metrics for different time spans we need to create 2 separate retention policies:

The first one will collect all the raw metrics from the AXTrace agent, while the second one will store the most important metrics, which we want to retain longer. These metrics are calculated by a continuous query. We’ll discuss later in more detail why this second retention policy is needed.

Default AXTrace metrics:

CREATE RETENTION POLICY "etwrp" ON "<DBName>" DURATION 2w REPLICATION 1 SHARD DURATION 24h

Aggregated AXTrace metrics:

CREATE RETENTION POLICY "etwrpext" ON "<DBName>" DURATION 26w REPLICATION 1 SHARD DURATION 168h

To connect to the InfluxDB databases you can run this command:

influx -host influxdb.neteyelocal -ssl -username <user> -password <passwd>

And then ‘use <DBName>’ to select the DB.

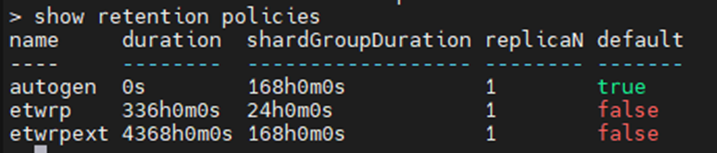
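Once connected, the policies can be listed with an explicit ON clause, which works even before a database has been selected. A minimal sketch, assuming the two policies above were created on <DBName>:

```sql
-- List all retention policies defined on the database.
-- Expect "autogen" (the default) plus "etwrp" and "etwrpext".
SHOW RETENTION POLICIES ON "<DBName>"
```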

When you run the command ‘show retention policies’ you should see something like this:

Routing the Metrics to the Right Retention Policy

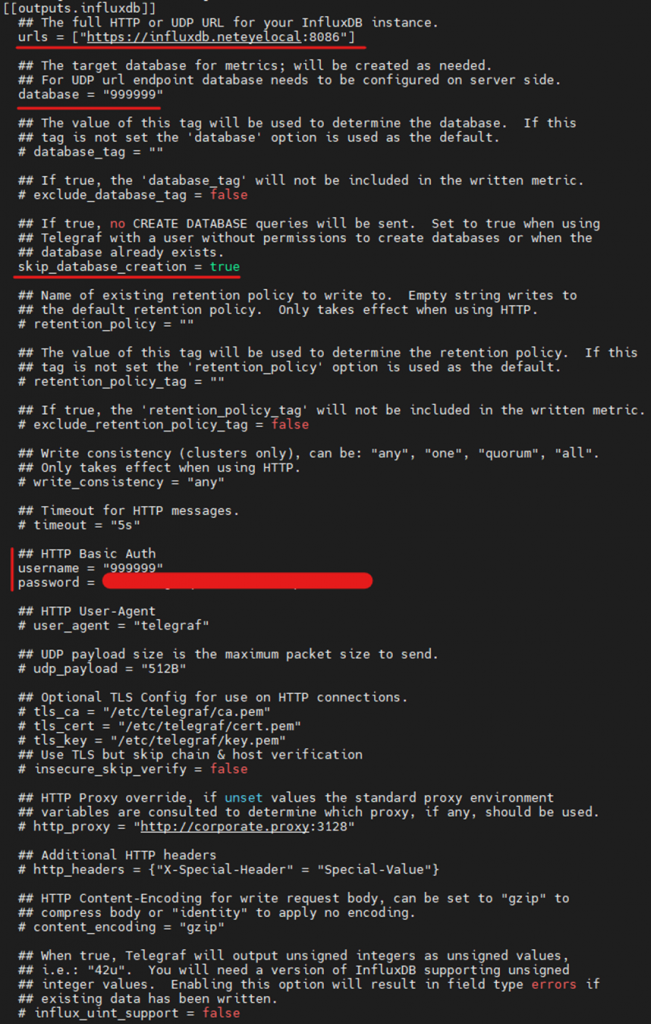

On your NetEye master at /neteye/local/telegraf/conf you will find the various Telegraf consumers. These services listen on the NATS stream for the metrics for your tenant, and then write them out to InfluxDB. By default all of a tenant’s metrics are written with the default retention policy, so we’ll need to change that.

NB: If you are using a NetEye Satellite, every time you launch ‘neteye satellite config create’ this file will be overwritten!

Default file:

As you can see there are no references at all to a retention policy. So we need to delete the whole ‘[[outputs.influxdb]]’ block and substitute it with this one:

...

[[outputs.influxdb]]

#This is the default output, but ignores the AxTracer metrics

namedrop = ["ETW"]

urls = ["https://influxdb.neteyelocal:8086"]

database = "<DBName>"

retention_policy = ""

skip_database_creation = true

username = "<DBName>"

password = ""

[[outputs.influxdb]]

#This output will write the AXTracer metrics on my new retention etwrp

namepass = ["ETW"]

urls = ["https://influxdb.neteyelocal:8086"]

database = "<DBName>"

retention_policy = "etwrp"

user_agent = "telegraf-etwrp"

skip_database_creation = true

username = "<DBName>"

password = ""

...

You may be wondering at this point why there is no output block for the retention policy etwrpext. As mentioned earlier, this retention policy is used by the continuous query: it aggregates the raw metrics and writes the results to etwrpext, so that the more useful data is stored for a much longer time period.

To recap: the etwrp retention policy holds the raw data, and etwrpext holds the refined, aggregated data.
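Queries that do not name a retention policy read from the database’s default one, so the rerouted metrics will seem to have disappeared unless the measurement is fully qualified. A minimal sketch for checking the routing, assuming the measurement is called ETW (as in the namepass filter above), that autogen is still the default policy, and that Telegraf has been restarted with the new configuration:

```sql
-- Newest raw points written by the second output block.
SELECT * FROM "<DBName>"."etwrp"."ETW" ORDER BY time DESC LIMIT 5

-- Should return nothing a few minutes after the namedrop filter is active:
-- no new ETW points should land in the default policy.
SELECT count(*) FROM "<DBName>"."autogen"."ETW" WHERE time > now() - 10m
```

Dashboards and alert queries that read the ETW measurement need the same fully qualified form.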

To populate the etwrpext retention policy, connect to your InfluxDB and create a continuous query. You’ll have to substitute <tenant ID> with your database name (the <DBName> used above).

Here’s an example that aggregates the RPC_server_duration metric:

CREATE CONTINUOUS QUERY ETWreduce ON "<tenant ID>" RESAMPLE EVERY 10m FOR 15m BEGIN SELECT sum(RPC_server_duration) AS RPC_server_duration_sum, min(RPC_server_duration) AS RPC_server_duration_min, max(RPC_server_duration) AS RPC_server_duration_max INTO "<tenant ID>".etwrpext.ETW_stats FROM "<tenant ID>".etwrp.ETW GROUP BY time(1m), AxInstance, AxUser, host END

Conclusion

Now we don’t have to worry about our database getting full from all the raw AXTracer data. All AXTracer metrics older than 2 weeks will be dropped, while the aggregated data will be stored for 26 weeks (about 6 months).
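To confirm that the aggregation is actually running, the continuous query and its output can be inspected from the influx shell. A minimal sketch, using the names from the example above (ETWreduce, ETW_stats); results should only appear after the first run, since the query is resampled every 10 minutes:

```sql
-- Shows every continuous query per database; ETWreduce should be listed.
SHOW CONTINUOUS QUERIES

-- Aggregated points written into the long-lived policy during the last hour.
SELECT * FROM "<tenant ID>"."etwrpext"."ETW_stats" WHERE time > now() - 1h LIMIT 10

-- Continuous queries cannot be altered in place. To change one,
-- drop it and create it again (left commented out on purpose):
-- DROP CONTINUOUS QUERY "ETWreduce" ON "<tenant ID>"
```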

Source

Telegraf influxdb output

Downsampling and data retention in InfluxDB

Filtering metrics on Telegraf
